As AI evaluation moves away from traditional methods that reward memorized answers, a new era is emerging in which AI's true problem-solving capabilities are tested through strategic games.

Does a High Test Score Mean You’re Truly Smart?

Imagine a friend who always gets 100 on every exam. However, when you ask this friend something as simple and flexible as “What should we have for lunch today?” or “It started raining suddenly, what should we do?”, they can’t give a proper answer.

Would we call this friend “truly smart”? We would likely suspect, “Did they just memorize the questions and answers?”

The world of Artificial Intelligence (AI) is currently in a similar situation. Until now, we have graded how smart an AI is using tools called benchmarks (standardized test papers for measuring AI performance). However, a growing number of experts are saying, “We can no longer trust these test scores.” According to “Some researchers are rethinking how to measure AI intelligence,” today’s widely used evaluation methods are criticized as too easy to exploit or “game” (using tricks to get a high score) rather than showing actual capability. [Source 6]

Why Does This Matter?

Accurately measuring an AI’s ability isn’t just about ranking models.

First, it’s about safety. If we overestimate an AI’s ability and assign it tasks that are too difficult, or conversely, underestimate it and neglect potential risks, unexpected accidents can occur. This is why the National Institute of Standards and Technology (NIST) is focusing on a ‘risk-based approach’ to improve AI measurement science and standards. [Artificial intelligence | NIST](https://www.nist.gov/artificial-intelligence) [Source 10]

Second, it’s to identify true innovation. According to the AI Index Report 2025, the influence of AI is now penetrating deep into our society, economy, and global governance. Artificial Intelligence Index Report 2025 [Source 16] Determining whether such a critical technology possesses “real” intelligence or is merely a “parrot” mimicking past data is a key question that will shape our future.

In Plain Terms: Shifting from Paper Tests to ‘Soccer Matches’

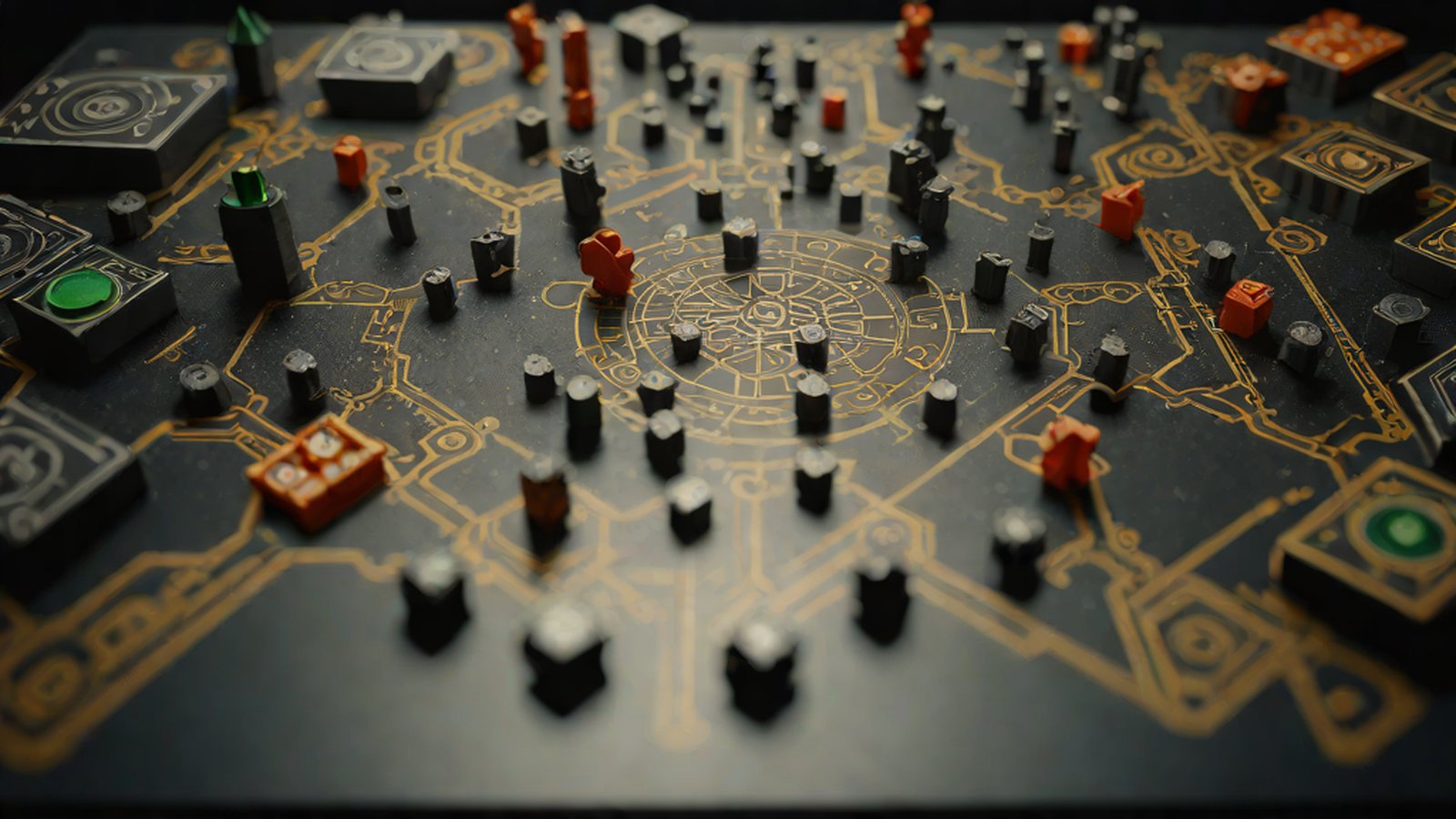

Until now, AI evaluation has been like ‘multiple-choice problem solving.’ There was a set answer, and if the AI got it right, it received points. However, Google DeepMind wants to completely change this paradigm. Their solution is the ‘Kaggle Game Arena.’ Rethinking how we measure AI intelligence [Source 1]

To use an analogy, it’s like saying, “Get out of the paper testing room and go play a soccer match on the field.”

1. Head-to-Head Showdowns

While existing methods had an AI sit alone in a quiet room solving fixed problems, in the Kaggle Game Arena, AI models face off against each other. They must read the opponent’s moves and respond in real time through strategic games. It’s not just about knowing a lot; it’s about exercising ‘wisdom’ to defeat an opponent. Rethinking how we measure AI intelligence - ONMINE [Source 4]

2. ‘Dynamic’ Measurement with No Fixed Answers

Just as you cannot know in advance how an opponent will move in a soccer match, the confrontations on this platform are highly dynamic. Simply put, it’s impossible to memorize the answers beforehand. One can only win by exercising intelligence appropriate to the situation, which allows for a much more verifiable and vivid measurement of AI capabilities. Rethinking how we measure AI intelligence [Source 7]

3. ‘Strategy’ and ‘Resource Management’

The arena is not just about the ability to string together plausible sentences. It examines how a model manages limited resources and sets long-term plans to achieve its goals while playing strategic games. This represents the radical shift in AI intelligence benchmarking proposed by Google DeepMind. DeepMind Proposes Radical Shift in AI Intelligence Benchmarking [Source 17]
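To make the idea of ranking models by head-to-head results concrete, here is a minimal sketch of an Elo-style rating update, a long-established way of ranking chess players from match outcomes. It is an illustration only: the sources cited here do not say how the Kaggle Game Arena actually scores models, and the model names, starting ratings, and `k` value below are all hypothetical.

```python
# Illustrative sketch only: an Elo-style rating update for head-to-head games.
# Assumption: this is NOT taken from the Kaggle Game Arena; the model names,
# starting ratings, and K-factor are made up for the example.

def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_ratings(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one game.

    score_a is 1.0 if A won, 0.5 for a draw, and 0.0 if A lost.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    # Whatever A gains, B loses, so the total rating stays constant.
    return rating_a + delta, rating_b - delta


# Two hypothetical models start level and play three games.
ratings = {"model_x": 1500.0, "model_y": 1500.0}
matches = [
    ("model_x", "model_y", 1.0),  # model_x wins
    ("model_x", "model_y", 1.0),  # model_x wins again
    ("model_x", "model_y", 0.0),  # model_y wins
]
for a, b, score_a in matches:
    ratings[a], ratings[b] = update_ratings(ratings[a], ratings[b], score_a)

# Print the models from highest to lowest rating.
for name, rating in sorted(ratings.items(), key=lambda item: -item[1]):
    print(f"{name}: {rating:.1f}")
```

The point of a scheme like this is that there is no answer key to memorize: a rating only rises when a model actually beats opponents, and beating a stronger opponent counts for more than beating a weaker one.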

Current State: Are Human IQ Tests Now ‘Elementary School’ Exam Papers?

We often encounter sensational news like “This AI’s IQ exceeds 150.” However, as of 2025, such simple comparisons have lost much of their meaning. For the latest AI systems like GPT-4o or Gemini 1.5, traditional human IQ tests are no longer appropriate metrics for measuring sophisticated cognitive abilities. Rethinking AI Intelligence Measurement: Why IQ Tests Fall Short for AI … [Source 15]

Furthermore, we often think that AI is racing along a single track toward a goal called Artificial General Intelligence (AGI, AI with intelligence equal to or greater than a human’s). However, expert David Pereira points out that this is a misconception. The assumption that intelligence operates along a single dimension (a linear path from narrow AI to general intelligence) has hit its limit. Why “AGI” Is No Longer a Useful Metric: Rethinking How We Measure AI … [Source 2]

To use an analogy, intelligence is not a number that can be ranked like ‘how many centimeters tall someone is,’ but a multi-dimensional ability of ‘how well one can solve complex problems across diverse environments.’

What Lies Ahead?

Experts are now contemplating new measurements of intelligence that go beyond the ‘Imitation Game.’ Rather than just how perfectly an AI mimics a human, attempts are being made to establish new theories by exploring how actual intelligence manifests. [Beyond the Imitation Game: Rethinking How We Measure General Intelligence | Research Communities by Springer Nature](https://communities.springernature.com/posts/beyond-the-imitation-game-rethinking-how-we-measure-general-intelligence) [Source 9]

Additionally, as discussed in seminars at Cornell University, new standards for measuring the complexity of information (such as the shift from Entropy to Epiplexity) are being introduced. This is an effort to measure the ‘density of intelligence’ rather than just the ‘amount of knowledge’ an AI possesses. AI-MI Seminar Series: From Entropy to Epiplexity - Rethinking Information for Computationally Bounded Intelligence - The Artificial Intelligence Materials Institute [Source 11]

Ultimately, future AI will be evaluated not based on “what it knows,” but on “how it solves problems and thinks strategically in a changing environment.”

MindTickleBytes AI Reporter’s Perspective

Perhaps we have been overly fixated on AI ‘report cards.’ We have entered an era where how an AI reached a conclusion and what flexibility it shows in the face of unexpected variables are far more important than the result of getting 100 points.

Attempts like the Kaggle Game Arena are the first steps toward treating and evaluating AI not as simple calculators, but as ‘intellectual partners’ who will live in the world with us. Real intelligence is proven in a world without an answer key. Now, we ask the AI: “Forget the test questions—are you ready to navigate this complex world together?”

References

- Rethinking how we measure AI intelligence

- Why “AGI” Is No Longer a Useful Metric: Rethinking How We Measure AI …

- Rethinking how we measure AI intelligence - ONMINE

- Rethinking how we measure AI intelligence - AiProBlog.Com

- Some researchers are rethinking how to measure AI intelligence

- Rethinking how we measure AI intelligence

- [Beyond the Imitation Game: Rethinking How We Measure General Intelligence | Research Communities by Springer Nature](https://communities.springernature.com/posts/beyond-the-imitation-game-rethinking-how-we-measure-general-intelligence)

- [Artificial intelligence | NIST](https://www.nist.gov/artificial-intelligence)

- AI-MI Seminar Series: From Entropy to Epiplexity - Rethinking Information for Computationally Bounded Intelligence - The Artificial Intelligence Materials Institute

- Rethinking how we measure AI intelligence - Robotics.ee

- Rethinking AI Intelligence Measurement: Why IQ Tests Fall Short for AI …

- Artificial Intelligence Index Report 2025

- DeepMind Proposes Radical Shift in AI Intelligence Benchmarking
