Built on Gemini 2.0, Gemini Robotics is a family of AI models that lets robots understand complex environments, use tools, and act on their own judgment.

Robots, Now Understanding the ‘Situation’ Instead of Just Hearing ‘Commands’

Imagine this: a pile of laundry is heaped in the middle of your living room. You tell the robot, “Please tidy this up.” A conventional robot would simply follow a pre-programmed set of instructions, such as “pick up laundry and put it in the basket.” But what if the pile contains a silk dress the robot has never seen before, or fragile ornaments? Or what if a cat suddenly jumps out from between the clothes?

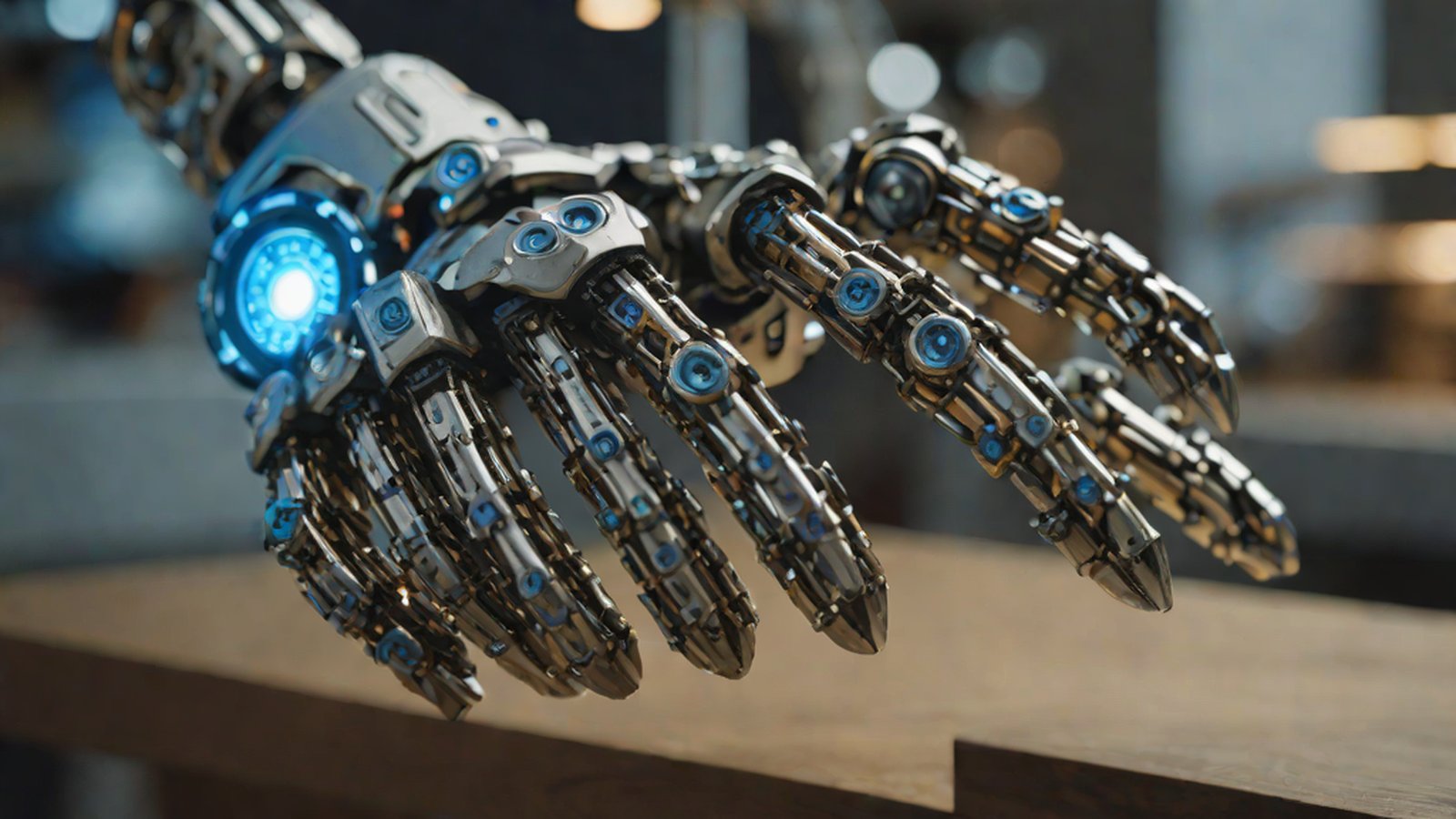

Gemini Robotics, introduced by Google DeepMind, is designed to let robots ‘think’ and ‘judge’ for themselves in exactly these unexpected situations (Gemini Robotics brings AI into the physical world). AI is stepping out of the text and images on our screens and into the physical world we live in. A robot is no longer just a cold mechanical arm; it can grasp a situation and respond to it much as a person would.

Why Is This Important?

Until now, most robots have been ‘reactive systems.’ Simply put, humans had to program thousands or even tens of thousands of rules such as “If you see A, do B.” But the world we live in is far too complex and changes constantly. The sock on the living room floor is in a different place today than it was yesterday, and an object’s shape looks different depending on the angle of the light. It is nearly impossible for humans to write rules in advance for every possible situation.
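The limitation is easy to see in code. The following minimal Python sketch models a ‘reactive’ controller as a lookup table; the rule table, the object names, and the `reactive_controller` function are hypothetical and only illustrate the “If you see A, do B” pattern, not any real robot’s software.

```python
# Hypothetical rule table for a "reactive" robot: each known observation maps to one fixed action.
RULES = {
    "sock": "pick up and place in laundry basket",
    "shirt": "pick up and place in laundry basket",
    "towel": "fold and place on shelf",
}


def reactive_controller(observation: str) -> str:
    """Return the pre-programmed action for an observation, or stop if no rule matches."""
    # A reactive system cannot reason about anything outside its rule table,
    # so every unforeseen object or event falls through to the same default.
    return RULES.get(observation, "no rule: stop and wait for a human")


if __name__ == "__main__":
    for seen in ["sock", "silk dress", "cat"]:
        print(f"{seen} -> {reactive_controller(seen)}")
```

Every new object or event, whether a silk dress or a cat, needs another hand-written entry, which is why tables like this stop scaling in homes and other unpredictable environments.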

Gemini Robotics matters because it turns robots from simple machines into ‘general-purpose agents,’ systems that can carry out many different kinds of tasks on their own (Gemini Robotics 1.5 brings AI agents into the physical world). In practice, this means robots can solve complex physical tasks by themselves and adapt flexibly to environments they have never visited and instructions they have never heard before (Paper page - Gemini Robotics: Bringing AI into the Physical World).

Google DeepMind describes this as an “important step toward implementing Artificial General Intelligence (AGI, human-level intelligence) in the physical world” (Google DeepMind unveils Gemini Robotics 1.5 to bring AI agents into the physical world). In other words, AI no longer has only a smart brain; it is also learning to control a ‘body’ that acts in the real world.

Explained Simply: The Robot’s ‘Eyes, Mouth, and Hands’ Merged Into One

To understand Gemini Robotics, it helps to know the term VLA model. VLA stands for Vision-Language-Action (Gemini Robotics: Bringing AI into the physical world - YouTube).

Let’s use a daily life analogy. Imagine you are cooking in the kitchen.

- Vision: You see in real time how far along the slicing on the cutting board is and whether the water in the pot is boiling over.

- Language: A family member helping you says, “Please turn down the heat,” and you hear and understand the request.

- Action: Based on the information obtained through your eyes and ears, you move your hands to adjust the gas stove and slice ingredients.

Previously, the AI models responsible for these three functions had to be built separately and then connected. The AI acting as the eyes would pass along what it saw, the AI acting as the mouth would interpret it, and then it would send a command to the AI acting as the hands. Gemini Robotics, built on Google’s Gemini 2.0, instead handles all of these steps at once in a single large ‘brain’ (Gemini Robotics: Bringing AI into the Physical World - ADS).
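The structural difference between a chained pipeline and a single model can be sketched in a few lines of Python. This is a toy under loud assumptions: `Scene`, `see`, `interpret`, `act`, `pipeline_policy`, and `unified_policy` are all hypothetical, and the ‘unified’ function is a hand-written stand-in for what is, in Gemini Robotics, a learned neural network. The sketch only shows why handing information from one separate module to the next can lose details that a model seeing everything at once still has.

```python
from dataclasses import dataclass


@dataclass
class Scene:
    """Toy stand-in for a camera frame (hypothetical fields, for illustration only)."""
    pot_boiling_over: bool
    burner_level: int  # current burner setting on a 0-10 scale


# --- Modular pipeline: three separately built parts chained together ---
def see(scene: Scene) -> str:
    # The "eyes" compress the frame into a text label; the burner level is lost here.
    return "pot boiling over" if scene.pot_boiling_over else "pot ok"


def interpret(label: str, instruction: str) -> str:
    # The language part receives only the label, never the original frame.
    if "heat" not in instruction.lower():
        return "wait"
    return "lower_heat_fast" if label == "pot boiling over" else "lower_heat"


def act(command: str) -> int:
    # The "hands" never learn the current burner level, so they jump to fixed presets.
    presets = {"lower_heat_fast": 2, "lower_heat": 5}
    return presets.get(command, -1)  # -1 means "leave the burner alone"


def pipeline_policy(scene: Scene, instruction: str) -> int:
    return act(interpret(see(scene), instruction))


# --- Unified VLA-style policy: one function sees frame and instruction together ---
def unified_policy(scene: Scene, instruction: str) -> int:
    if "heat" not in instruction.lower():
        return -1
    # With the full scene available, the new setting is relative to the current one.
    step = 3 if scene.pot_boiling_over else 1
    return max(scene.burner_level - step, 0)


if __name__ == "__main__":
    scene = Scene(pot_boiling_over=False, burner_level=4)
    request = "Please turn down the heat."
    print("pipeline:", pipeline_policy(scene, request))  # preset 5: the heat goes UP
    print("unified: ", unified_policy(scene, request))   # 4 - 1 = 3
```

No single stage of the pipeline is wrong on its own; the failure comes from the hand-off, where the burner level is discarded before the stage that needs it.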

As a result, robots have gained ‘dexterous’ skills: they can react to a user’s voice in real time and nimbly adjust their hand movements as the situation in front of them changes (Gemini Robotics: Bringing AI into the physical world - LinkedIn). In particular, the Gemini Robotics-ER (Embodied Reasoning) model gives robots enhanced spatial and temporal understanding (Gemini Robotics: Bringing AI into the Physical World - arXiv). Rather than simply recognizing an object, the robot anticipates what will happen next as it moves, reasoning, for example, “If I move this cup, the plate behind it might fall over” (Google DeepMind introduces two Gemini-based models to bring AI to the real world).
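The kind of consequence check in the cup-and-plate example can be illustrated with a deliberately simple, hand-coded model: a hypothetical table recording which object leans on or rests on which, plus a breadth-first search that finds everything that could fall if one object is moved. This is an assumption-laden toy; Gemini Robotics-ER is a learned model and is not described as consulting an explicit graph like this. The `SUPPORTED_BY` table and `objects_at_risk` function are illustrative names only.

```python
from collections import deque

# Hypothetical scene description: each object maps to the objects it leans on or rests on.
SUPPORTED_BY = {
    "plate": ["cup"],    # the plate is propped up against the cup
    "fork": ["plate"],   # the fork rests on the plate
    "cup": ["table"],
    "napkin": ["table"],
}


def objects_at_risk(moved: str, supported_by: dict[str, list[str]]) -> set[str]:
    """Return every object that directly or indirectly depends on `moved` for support."""
    at_risk: set[str] = set()
    queue = deque([moved])
    while queue:
        current = queue.popleft()
        # Find objects that lean on the current one; they may fall, and so may whatever leans on them.
        for obj, supports in supported_by.items():
            if current in supports and obj not in at_risk:
                at_risk.add(obj)
                queue.append(obj)
    return at_risk


if __name__ == "__main__":
    print(sorted(objects_at_risk("cup", SUPPORTED_BY)))  # ['fork', 'plate']
```

Moving the cup puts the plate at risk directly and the fork indirectly, which is the chain of ‘what happens next’ the paragraph describes.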

Current Status: The Arrival and Evolution of ‘Thinking Robots’

Over the course of 2025, Google DeepMind advanced this technology rapidly, steadily expanding what robots can do.

- March 2025: Gemini Robotics and Gemini Robotics-ER, both based on Gemini 2.0, were unveiled. Demonstrations of robots interacting naturally with people and carrying out complex instructions drew wide attention (Gemini Robotics brings AI into the physical world).

- June 2025: An ‘On-Device’ model was released that lets robots make decisions and move on-site without an internet connection (Google rolls out new Gemini model that can run on robots locally). This allows robots to operate and complete tasks on their own in security-sensitive environments such as factories, or in harsh, remote areas that internet signals do not reach.

- September 2025: The more capable version 1.5 was unveiled (Google DeepMind unveils its first “thinking” robotics AI). Notably, Gemini Robotics-ER 1.5 has explicit ‘thinking’ capabilities, so when given a complex instruction it can work out its own strategy. When it lacks a piece of information, it can even call external tools such as Google Search to look it up (Google DeepMind unveils its first “thinking” robotics AI).
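The ‘look it up when unsure’ behavior can be sketched as a small planning loop. In the minimal Python example below, `fake_search` is a hypothetical stand-in for an external tool such as Google Search (it returns canned answers and makes no network calls), and `plan_sorting` is a hypothetical planner; neither reflects the actual Gemini API or the internals of Gemini Robotics-ER 1.5. The "set aside for review" fallback is likewise an illustrative assumption.

```python
from typing import Callable


def fake_search(query: str) -> str:
    """Hypothetical stand-in for an external search tool: canned answers, no network access."""
    canned = {
        "which bin for pizza box": "compost",
        "which bin for glass jar": "recycling",
    }
    return canned.get(query, "unknown")


def plan_sorting(items: list[str], known: dict[str, str],
                 search: Callable[[str], str]) -> list[str]:
    """Build a step-by-step plan, calling the tool only for items the planner does not know."""
    memory = dict(known)  # copy so the caller's dictionary is not modified
    steps = []
    for item in items:
        bin_name = memory.get(item)
        if bin_name is None:
            # Missing knowledge: consult the external tool instead of guessing.
            bin_name = search(f"which bin for {item}")
            memory[item] = bin_name  # remember the answer for repeated items
        if bin_name == "unknown":
            steps.append(f"set {item} aside for review")
        else:
            steps.append(f"pick up {item} -> place in {bin_name} bin")
    return steps


if __name__ == "__main__":
    plan = plan_sorting(["banana peel", "pizza box", "glass jar"],
                        known={"banana peel": "compost"}, search=fake_search)
    for step in plan:
        print(step)
```

Passing the tool in as a parameter keeps the planning logic separate from how answers are obtained, so a real search client, a database lookup, or a test double could be swapped in.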

By analogy, if robots of the past were ‘new hires’ who could barely do what they were told, they have now become ‘seasoned experts’ who look up what they don’t know and solve problems on their own (Gemini Robotics brings AI into the physical world - Digital India).

What Happens Next?

Currently, Gemini Robotics-ER 1.5 is available to developers through Google AI Studio, while Gemini Robotics 1.5 is being offered first to select partners and tested in real industrial settings (Google DeepMind unveils Gemini Robotics 1.5 to bring AI agents into the physical world).

This suggests that smarter, more capable robots may soon be part of everyday life. Robots that once only moved fixed items in factories could help with housework, manage complex manufacturing lines, and act as partners that make their own judgments to save lives at dangerous disaster sites. AI, once a genius confined to the digital world, is now gaining a sturdy body and taking big steps toward us. Are you ready for a future where robots go beyond being our ‘tools’ and become our ‘companions’?

AI’s Perspective

MindTickleBytes AI Reporter’s Perspective: AI has moved beyond winning at chess and drawing wonderful pictures; it is now learning to pick up a broom and clean a room, or to repair complex machinery. Gemini Robotics could be a key to opening an era of true agents, in which artificial intelligence does not stay in the realm of abstract ‘data’ but carries through to physical ‘action.’ Most encouraging of all, robots have begun to grasp not just the words of human language but the intent and physical context behind them.

References

- Gemini Robotics 1.5 brings AI agents into the physical world

- Gemini Robotics: Bringing AI into the Physical World (arXiv:2503.20020)

- Gemini Robotics: Bringing AI into the physical world - YouTube

- Google News - Google DeepMind launches Gemini Robotics - Overview

- Paper page - Gemini Robotics: Bringing AI into the Physical World

- Gemini Robotics: Bringing AI into the physical world - LinkedIn

- [Gemini Robotics brings AI into the physical world… TechNews](https://news-tech.io/ko/news/gemini-robotics-brings-ai-into-the-physical-world)

- Gemini Robotics brings AI into the physical world - Digital India

- Google DeepMind, Gemini-based VLA (Vision-Language-Action) model…

- Gemini Robotics brings AI into the physical world - Google DeepMind Blog

- Google DeepMind unveils Gemini Robotics 1.5 to bring AI agents into the physical world

- Gemini Robotics: Bringing AI into the Physical World - ADS

- Google DeepMind introduces two Gemini-based models to bring AI to the real world

- Google rolls out new Gemini model that can run on robots locally

- Google DeepMind unveils its first “thinking” robotics AI
